Last night, the wife and I brewed up some nice Medicare mimosas (that’s orange Metamucil with a pinch of MiraLAX for those who don’t know, yet) and sat down to watch a documentary on the desktop. It was my night to choose, so we didn’t watch the National Geographic folks anthropomorphizing the animal of the week. Instead, we watched something interesting on PBS. It’s an old series imaginatively entitled “The Brain”. It’s really very good, except for one thing. Within the first few minutes, the narrator says the E word (emergence), and he just keeps saying it.

I’m inclined to let this sort of thing go. Saying that a property emerges in the subject of a microstructural description is often a way of stepping over a steaming pile of metaphysics that lies between a discussion of the properties of an object’s components and a discussion of the properties of the object itself. I can forgive the use of shorthand.

The narrator initially uses this shorthand meaning of emergence. But as things go along, it becomes clear that he also endorses weak emergence. Then he offhandedly states that colors exist in the mind and not in reality, which indicates that he really does have things the wrong way around.

In defense of the narrator, he still isn’t advocating for strong emergence. Strong emergence is the idea that once some threshold condition is met among components of an object, the group of components comprising the object acquires a new property which then takes over the behavior of the object as a whole, and by extension, that object’s components.

This magical event effectively erases, at least temporarily, the properties of the object’s components. While they remain pieces of the whole, they participate in events according to the dictates of the new property. It is only when they fall off the bus, either accidentally, or via our purposeful examination, that they reacquire their individual properties once again.

For instance, neurons generate electrical impulses, regulate their membrane potentials, and secrete paracrine signals until they are gathered in a certain number and arranged in a certain pattern, at which point they exceed the threshold for becoming a mind and begin to do things like experience, think, and remember. As long as we look at the collection of neurons gathered in the threshold number and arrangement, we will see them exemplifying mental properties. If we pull one of the neurons out of the brain or touch a subthreshold group of them with an electrode probe, we see them revert to exemplifying neuronal properties.

Weak emergence differs from the claims above in that it takes those claims to be metaphorical. When we get to the threshold state for the components of an object, we don’t get an actual, new, causal force out of that last brick added to the structure. Instead, it just becomes more convenient to speak of the object as if it had developed such a new property.

In the case of the mind, that would mean that the threshold number and arrangement of neurons simply becomes too difficult to manage descriptively. It makes sense to begin to use mental terminology to describe their collective behavior rather than trying to persist in using neurologic terminology.

In the case of both strong and weak emergence, we generate additional mysteries to solve, and those mysteries appear to be unsolvable. We have no account of how or why threshold conditions are established or met. We have no idea how properties flip on and off in the components and in the designated objects composed of those subunits. The difference between the two positions is that in weak emergence, these difficulties arise in explaining a metaphor rather than a mechanism.

The root problem, however, is not flipping properties. The root problem is the non-relational account inherent in the treatment of objects and their components. We get another glimpse of this inverted view when the narrator of “The Brain” describes colors as constructs of the brain that are absent in reality. If we take the implied structure seriously, then there’s nothing to save neurons from a similar fate. The only difference might be that we have examples of people who live without colors, but no examples of people who live without neurons. However, we do have examples of people who seem happy to live without minds, from solipsists to eliminativists.

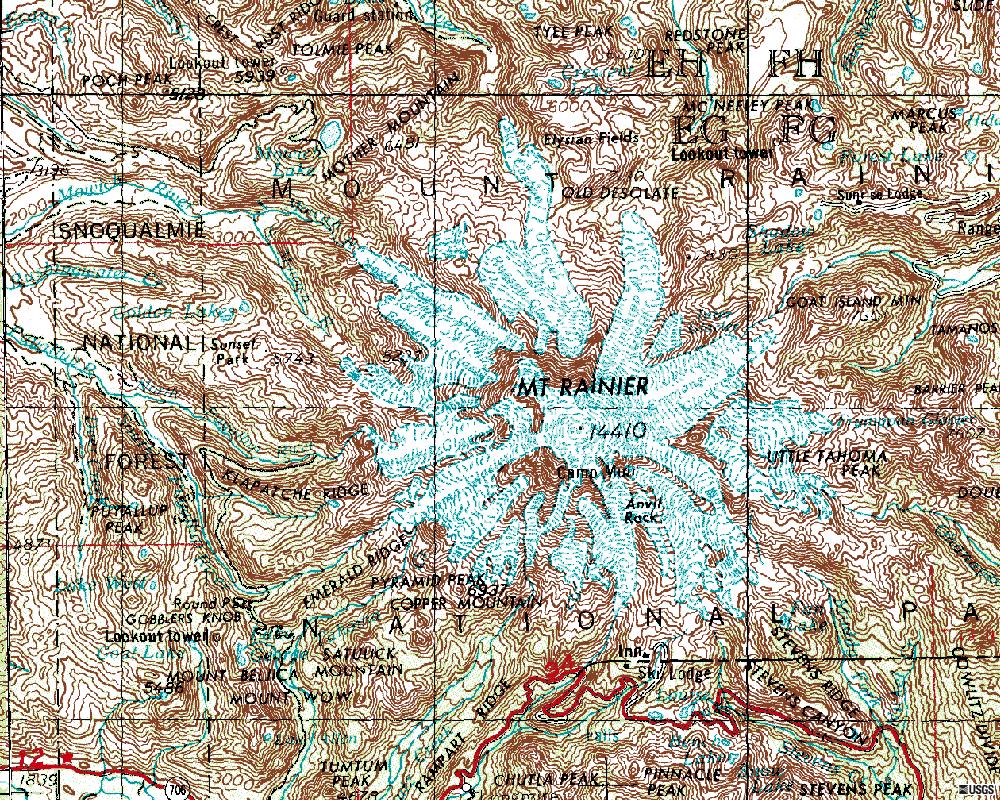

To clarify, minds are explained by brains which are explained by neurons which are explained by genes. Colors are explained by retinal pigment, neurons, cone cells, and wavelengths of light. The explanations begin with the object in question, and proceed down to the microstructure.

The microstructure doesn’t represent the object like the pieces of a jigsaw puzzle or a pile of little homunculi. Instead, the components provide a history of relationships and record of events situating the object of examination in the causal web of space and time.

A couple of examples, in the interest of de-spookifying the statement above. First, take the illustration that the documentary offers for neuronal activity generating consciousness. Our narrator gives the example of the unconscious brain during sleep. In deep sleep, the electrical activity generates a rudimentary waveform on EEG. In REM sleep, when the brain is ostensibly conscious, as well as during wakefulness, the EEG tracing shows a complex waveform. He compares this circumstance to a group of drummers, each initially drumming to their own rhythm. As they listen to each other and begin to coordinate their beats, music emerges.

If the implicit claim really held, John Coltrane wouldn’t have been doing much of anything that the rest of us couldn’t do, as long as we knew how to work the reed on a saxophone. The drummers can improvise a musical outcome because they understand the object (music) and the relationship of its components (the speed and timing of stick strikes on the drum head) to the object they compose. That relationship is a series of events involving hearing, drum-making skills, proprioceptive experiences, and the responses of previous brains to frequencies of stick strikes on drum heads. This explains why we can’t play jazz like John Coltrane. We speak of him improvising, but he improvised off of an explanation that situated him in a most musical zone.

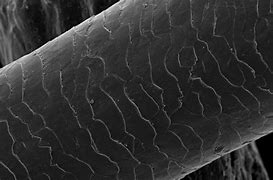

More to the point, we can look at the example of neurons and minds itself. Fully developed neurons can’t be placed in a bag (to borrow from a more gruesome tale offered up by a substance dualist; they are disgusting people) and shaken up to make a brain, much less a mind. The neurons have to go through the developmental process to provide an adequate explanation for the supervening mind. By developmental process, I mean the whole history of neuronal development, from primordial cells emitting chemical signals in response to changes in membrane polarization, to cell migration during gestation, to sensory integration during early childhood. The neurons bear the history of events identified with mental events. The state of affairs is the same as the status of drumsticks, drum heads, and drummers with regard to music. Those components explain the music because they offer a narrative of events that situates music in the course of events overall. And those specific components pertain to the tune of the day because those components have specific, music-related events explaining the components in their turn.

So that’s why I don’t like the E word. When it comes to minds, brains, and neurons, it perpetuates a mystery where there should be none. Worse, it dumbs things down generally, because it substitutes new properties for deep histories.

Problems remain. Dualisms will survive. The hard problem will still wake people in a cold sweat at night (go back to sleep, it’s epiphenomenal). People will still use their minds to insist that we don’t need minds.

Getting rid of the E word won’t solve much.

But it’s a step in the right direction.